EU Artificial Intelligence (AI) Act Compliance

The EU AI Act is a comprehensive regulatory framework that classifies AI systems by risk level, imposes strict requirements on high-risk applications, and bans certain unacceptable uses to ensure safety, transparency, and fundamental rights protection.

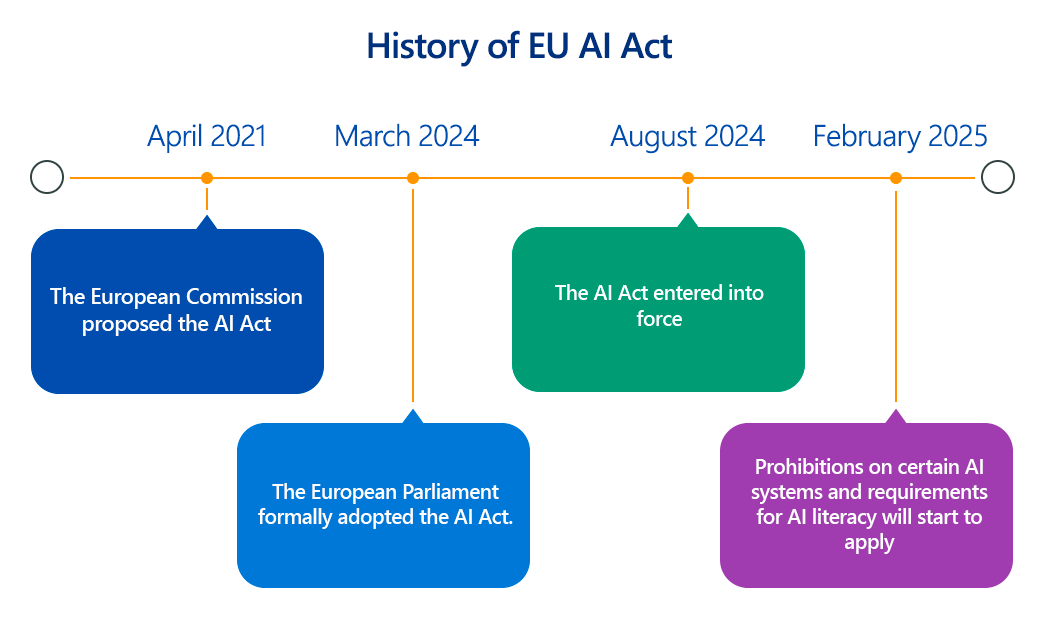

The European Union's Artificial Intelligence Act (EU AI Act), which entered into force in August 2024 with staggered application dates, marks a pivotal moment in the global regulation of artificial intelligence.

As the world's first comprehensive legal framework for AI, it aims to foster trustworthy AI development and deployment by introducing a risk-based approach, ensuring that AI systems are safe, transparent, non-discriminatory, and accountable.

This article delves into the technical intricacies of the Act, exploring its key compliance aspects, the importance of adherence, and practical steps for organizations.

Overview of the EU AI Act

At its core, the EU AI Act categorizes AI systems into four distinct risk levels, each with varying degrees of regulatory obligations:

- Unacceptable Risk: These AI systems are outright banned in the EU due to their potential to pose a clear threat to fundamental rights. Examples include AI systems employing subliminal manipulation, social scoring by governments, real-time remote biometric identification in public spaces (with very limited exceptions for law enforcement), and the untargeted scraping of facial images for databases.

- High Risk: This category encompasses AI systems that can pose significant harm to health, safety, or fundamental rights. These systems are subject to stringent requirements before they can be placed on the market or put into service. High-risk AI systems include those used in critical infrastructure (e.g., transport, utilities), education (e.g., access to education, exam scoring), employment (e.g., CV sorting, worker management), essential public and private services (e.g., credit scoring, emergency dispatch), law enforcement, migration and border control, and the administration of justice.

- Limited Risk: AI systems in this category require specific transparency obligations to ensure users are aware they are interacting with an AI. This includes chatbots and systems that generate or manipulate content (e.g., deepfakes), which must be clearly labeled as AI-generated.

- Minimal or No Risk: The vast majority of AI systems fall into this category, such as AI-enabled spam filters or video games. These systems are largely unregulated under the Act, though providers are encouraged to adhere to voluntary codes of conduct.

The Act also introduces specific provisions for General-Purpose AI (GPAI) models, which are powerful foundation models capable of performing a wide range of tasks. If a GPAI model has "systemic risk" (e.g., its cumulative training compute exceeds 10^25 floating-point operations (FLOPs), or the European Commission deems it high-impact), it faces additional scrutiny, including thorough evaluations and reporting of serious incidents.
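As a rough illustration of the risk-based approach described above, the following Python sketch triages a use case into one of the four tiers and checks a GPAI model against the 10^25 FLOPs training-compute threshold. The use-case tags and function names are hypothetical and chosen only for this example; real classification requires legal analysis against the Act's annexes, not a keyword lookup.

```python
from enum import Enum


class RiskTier(Enum):
    UNACCEPTABLE = "unacceptable"
    HIGH = "high"
    LIMITED = "limited"
    MINIMAL = "minimal"


# Hypothetical tags, loosely based on the examples listed in this article.
PROHIBITED_USES = {"subliminal_manipulation", "social_scoring", "untargeted_face_scraping"}
HIGH_RISK_USES = {"critical_infrastructure", "exam_scoring", "cv_sorting", "credit_scoring", "border_control"}
TRANSPARENCY_USES = {"chatbot", "deepfake_generation"}

# Cumulative training compute above which a GPAI model is treated as having systemic risk.
SYSTEMIC_RISK_TRAINING_FLOPS = 1e25


def classify_use_case(tag: str) -> RiskTier:
    """Map an illustrative use-case tag to a risk tier, strictest tier first."""
    if tag in PROHIBITED_USES:
        return RiskTier.UNACCEPTABLE
    if tag in HIGH_RISK_USES:
        return RiskTier.HIGH
    if tag in TRANSPARENCY_USES:
        return RiskTier.LIMITED
    return RiskTier.MINIMAL


def exceeds_systemic_risk_compute(training_flops: float) -> bool:
    """Return True when training compute is above the 10^25 FLOPs threshold."""
    return training_flops > SYSTEMIC_RISK_TRAINING_FLOPS


for tag in ("social_scoring", "cv_sorting", "chatbot", "spam_filter"):
    print(tag, "->", classify_use_case(tag).value)
print("3e25 FLOPs above threshold:", exceeds_systemic_risk_compute(3e25))
```

Checking the strictest tier first means a system that matches several categories is always assigned the most demanding one, which is the conservative default for a first-pass triage.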

Key Aspects of EU AI Act Compliance

For organizations developing or deploying AI systems, particularly high-risk ones, compliance involves a robust set of technical and organizational measures:

- Risk Management System: Providers of high-risk AI systems must establish, implement, document, and maintain a comprehensive risk management system throughout the AI system's lifecycle. This involves continuous identification, assessment, and mitigation of risks to health, safety, and fundamental rights.

- Data and Data Governance: A crucial technical aspect is ensuring the high quality of data used for training, validation, and testing of AI systems. Data sets must be relevant, representative, sufficiently complete, and, to the best extent possible, free of errors and biases. Robust data governance practices are essential to achieve data quality and integrity.

- Technical Documentation: Extensive and up-to-date technical documentation is mandatory before placing a high-risk AI system on the market. This documentation (detailed in Annex IV of the Act) must be clear, comprehensive, and provide all necessary information for authorities to assess compliance. It includes details on the system's architecture, development process, data used, security measures, and testing results (accuracy, robustness, cybersecurity).

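- Record-Keeping and Logging: High-risk AI systems must automatically log events over their lifetime. Providers must keep these logs for a period appropriate to the system's intended purpose, and for at least six months unless other applicable law provides otherwise. Technical documentation and related records must be kept at the disposal of authorities for 10 years after the system is placed on the market. Together, these obligations ensure traceability and accountability, particularly in case of errors or incidents.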

- Transparency and Provision of Information: Providers must ensure transparency about how their AI systems function. For high-risk systems, clear and adequate information must be provided to deployers (users) on the system's purpose, capabilities, limitations, and potential risks, enabling them to use it safely and effectively. For limited-risk systems, users must be explicitly informed when they are interacting with an AI.

- Human Oversight: High-risk AI systems must be designed to allow for appropriate human oversight. This means integrating mechanisms that ensure meaningful human involvement and control, preventing the AI system from overriding or misleading human decision-makers. Technical design should facilitate human intervention, monitoring, and the ability to interpret the system's outputs.

- Accuracy, Robustness, and Cybersecurity: High-risk AI systems must be designed and developed to achieve an appropriate level of accuracy, robustness, and cybersecurity. This includes the ability to withstand errors, failures, and malicious attacks, ensuring reliable and secure performance under normal and foreseeable conditions. Technical measures like input data validation, error handling, and robust cybersecurity safeguards are critical.

- Conformity Assessment and CE Marking: Before a high-risk AI system can be placed on the market, it must undergo a conformity assessment procedure, which for some categories involves a third-party notified body and for others relies on the provider's internal control. Upon successful assessment, the provider must draw up an EU Declaration of Conformity and affix the CE marking to the AI system, indicating its compliance with the Act.

- Quality Management System (QMS): Providers of high-risk AI systems must establish and maintain a documented QMS that ensures compliance throughout the AI system's lifecycle, from design and development to deployment and post-market monitoring.
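To make the record-keeping and human oversight requirements above more concrete, here is a minimal Python sketch of automatic event logging as append-only JSON lines. The field names, the `log_event` helper, and the example system and reviewer identifiers are assumptions made for illustration; the Act does not prescribe a log format.

```python
import io
import json
from datetime import datetime, timezone
from typing import Any, TextIO


def log_event(sink: TextIO, system_id: str, event_type: str, details: dict[str, Any]) -> dict[str, Any]:
    """Append one timestamped event as a JSON line and return the record."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "system_id": system_id,
        "event_type": event_type,
        "details": details,
    }
    sink.write(json.dumps(record, sort_keys=True) + "\n")
    return record


# An in-memory sink keeps the example self-contained; in practice this would
# be an append-only file or a log pipeline with controlled retention.
sink = io.StringIO()
log_event(sink, "cv-screening-v2", "prediction",
          {"input_ref": "application-0001", "output": "shortlist", "model_version": "2.3.1"})
log_event(sink, "cv-screening-v2", "human_override",
          {"input_ref": "application-0001", "reviewer": "hr-analyst-7", "new_output": "reject"})

lines = sink.getvalue().splitlines()
print(len(lines), "events logged")
```

Recording human overrides in the same stream as model predictions lets an auditor reconstruct both what the system produced and where a person intervened.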

Why Is EU AI Act Compliance Important?

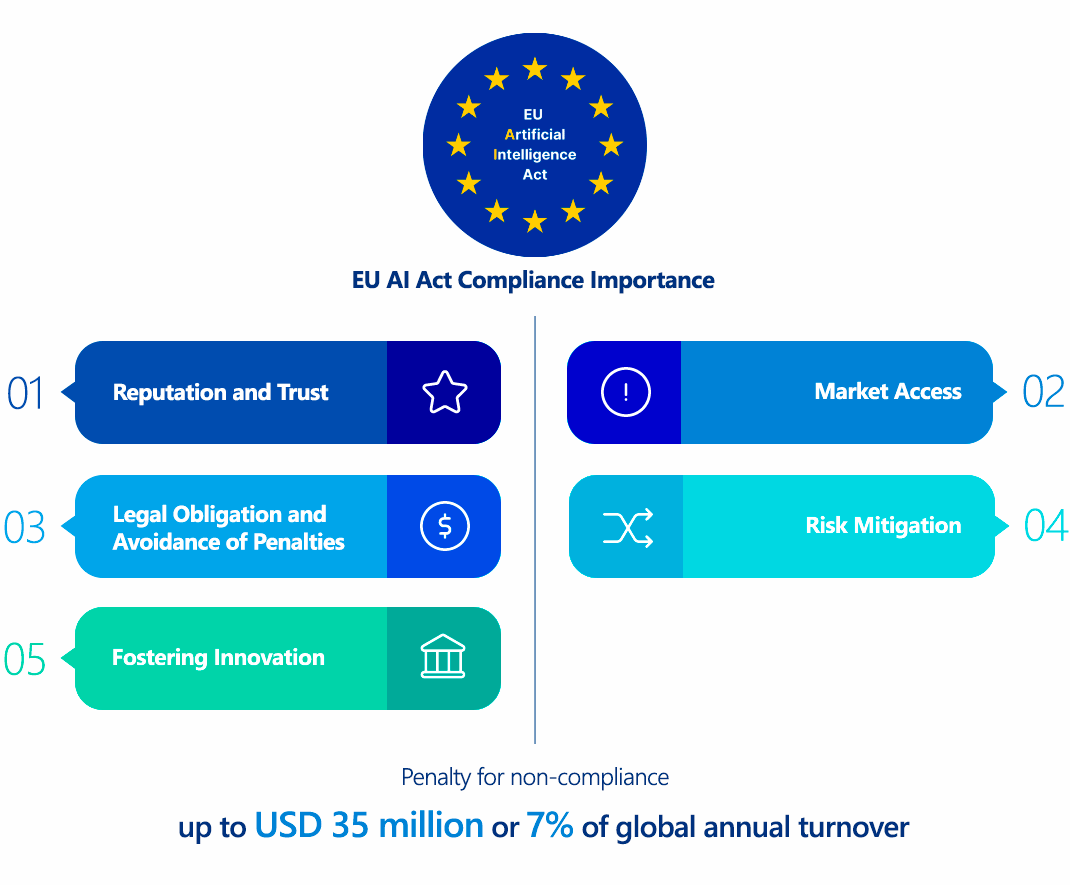

Compliance with the EU AI Act is crucial for several reasons:

- Legal Obligation and Penalties: The Act carries significant financial penalties for non-compliance: up to €35 million or 7% of a company's global annual turnover for violations of banned AI practices, and up to €15 million or 3% for most other violations. Supplying incorrect information can lead to fines of up to €7.5 million or 1% of turnover.

- Market Access: For companies operating within or seeking to enter the EU market, compliance is a prerequisite. Non-compliant AI systems may be prohibited from being placed on the market or put into service in the EU.

- Reputation and Trust: Adhering to the Act demonstrates a commitment to ethical and responsible AI, building trust among users, partners, and the public. This can lead to a significant competitive advantage in a world increasingly wary of AI's potential downsides.

- Risk Mitigation: The Act's requirements, particularly for high-risk AI, are designed to mitigate potential harms associated with AI, such as discrimination, privacy violations, or safety failures. Proactive compliance reduces the likelihood of incidents, legal challenges, and reputational damage.

- Fostering Innovation: While imposing regulations, the Act also aims to create a framework that encourages responsible AI innovation by providing legal clarity and building public confidence in AI technologies.

Who Needs to Comply with the EU AI Act?

The EU AI Act has broad extraterritorial reach, meaning it applies to any organization that:

- Places AI systems on the market or puts them into service in the EU, regardless of where the organization is established.

- Is located within the EU and develops, deploys, or uses AI systems.

- Provides AI systems whose output is used in the EU, even if the provider is located outside the EU.

This includes providers (developers), deployers (users), importers, and distributors of AI systems. Specific obligations vary depending on the role an entity plays and the risk classification of the AI system.

EU AI Act vs. GDPR Comparison

While both the EU AI Act and GDPR (General Data Protection Regulation) are landmark EU regulations aimed at protecting fundamental rights in the digital age, they have distinct focuses:

| Feature | EU AI Act | GDPR |
|---|---|---|
| Primary Focus | Regulating the development, deployment, and use of Artificial Intelligence systems, particularly concerning safety and fundamental rights. | Protecting personal data and privacy, giving individuals more control over their personal information. |
| Scope | Applies to AI systems, regardless of whether they process personal data. Focuses on the AI system itself and its impact. | Applies to the processing of personal data, regardless of the technology used. Focuses on data subjects and their rights. |
| Risk Model | Risk-based approach to AI systems (unacceptable, high, limited, minimal). | Risk-based approach to data processing, focusing on the likelihood and severity of risks to individuals' rights and freedoms. |
| Technicalities | Focuses on AI-specific technical requirements: risk management, data quality for AI training, transparency of AI models, human oversight, robustness, accuracy, cybersecurity of AI systems. | Focuses on data protection principles: data minimization, purpose limitation, storage limitation, accuracy, integrity and confidentiality, and accountability through technical and organizational measures (e.g., encryption, pseudonymization, access controls). |
| Overlaps | High-risk AI systems often process personal data, creating significant overlaps. Compliance with AI Act data governance requirements (e.g., bias mitigation in training data) will often contribute to GDPR compliance. Similarly, GDPR's principles like data minimization and accuracy are crucial for ethical AI development. | AI Act implicitly relies on GDPR for data privacy aspects within AI systems. For instance, the quality of datasets for AI training must also comply with GDPR principles if personal data is involved. |
| Enforcement | Enforcement through national supervisory authorities and the European AI Board/Office. | Enforcement through national Data Protection Authorities (DPAs) and the European Data Protection Board (EDPB). |

In essence, the EU AI Act complements the GDPR. Organizations dealing with AI systems that process personal data will need to comply with both regulations, ensuring that their AI adheres to the specific technical and ethical requirements of the AI Act while also upholding the fundamental rights related to data protection as enshrined in the GDPR.

How to Ensure EU AI Act Compliance?

Achieving and maintaining EU AI Act compliance is a multi-faceted endeavor requiring a systematic approach:

- AI System Inventory and Classification:

  - Technical Step: Conduct a comprehensive audit of all AI systems in use or under development within your organization.

  - Technical Detail: For each system, meticulously map its functionality, intended purpose, and how it interacts with users or other systems. Then, apply the EU AI Act's risk classification framework (unacceptable, high, limited, minimal) to each identified AI system. This often involves detailed technical assessments of the AI model's capabilities, the data it processes, and its impact on individuals.

- Gap Analysis and Remediation Planning:

  - Technical Step: Compare your current AI development and deployment practices against the specific requirements of the EU AI Act, especially for high-risk systems.

  - Technical Detail: Identify technical and organizational gaps. This could involve assessing your data governance pipelines for training data quality (e.g., ensuring representativeness, identifying and mitigating biases), reviewing model explainability mechanisms, evaluating human-in-the-loop interfaces, and scrutinizing cybersecurity controls for AI infrastructure. Develop a detailed roadmap for addressing these gaps.

- Implement a Robust AI Governance Framework:

  - Technical Step: Establish clear policies, procedures, and responsibilities for the entire AI lifecycle.

  - Technical Detail: This includes defining roles for data scientists, engineers, legal teams, and compliance officers. Implement version control for AI models, secure data handling protocols for training and inference data, and robust change management processes for AI system updates. Integrate AI governance into existing enterprise governance, risk, and compliance (GRC) frameworks. ISO/IEC 42001 (AI Management System) can provide a structured approach.

- Technical Documentation and Record-Keeping:

  - Technical Step: Develop and maintain comprehensive technical documentation as required by the Act.

  - Technical Detail: This involves detailing the AI system's architecture, training methodology (including datasets, hyperparameters, and algorithms used), evaluation results (accuracy, robustness, fairness metrics), and cybersecurity measures. Implement automated logging systems to record the AI system's activities throughout its operational lifetime, capturing critical events, decisions, and system performance metrics.

- Data Quality and Bias Mitigation:

  - Technical Step: Prioritize data quality and fairness in AI development.

  - Technical Detail: Implement data governance practices to ensure training, validation, and testing datasets are relevant, representative, and minimize bias. This can involve employing techniques like data augmentation, adversarial debiasing, and robust statistical analysis to identify and mitigate biases in the data and the resulting AI model outputs. Regular audits of datasets and model performance for fairness are crucial.

- Human Oversight and Explainability:

  - Technical Step: Design AI systems with human oversight mechanisms.

  - Technical Detail: This means providing clear interfaces for human intervention, the ability to override AI decisions, and tools for understanding the AI's reasoning (explainable AI - XAI). Technical documentation should clearly explain the AI's limitations, potential failure modes, and how humans can effectively monitor and control it.

- Accuracy, Robustness, and Cybersecurity by Design:

  - Technical Step: Embed these principles into the AI system's design and development.

  - Technical Detail: Implement rigorous testing methodologies (e.g., stress testing, adversarial testing) to ensure the AI system's accuracy, robustness against unexpected inputs or adversarial attacks, and resilience to cyber threats. This includes securing the AI model itself, its deployment environment, and the data pipelines.

- Conformity Assessment and CE Marking Process:

  - Technical Step: For high-risk AI systems, prepare for and undergo the required conformity assessment.

  - Technical Detail: This involves compiling all necessary technical documentation, demonstrating the effectiveness of the risk management system, and proving adherence to all relevant requirements. Once conformity is assessed, apply the CE marking.

- Continuous Monitoring and Post-Market Surveillance:

  - Technical Step: Establish systems for ongoing monitoring of AI systems in deployment.

  - Technical Detail: This includes continuous performance monitoring, anomaly detection, and mechanisms for reporting and investigating serious incidents or unexpected behaviors. Implement feedback loops to update and refine AI models and their associated documentation based on real-world performance.
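The fairness audits mentioned under data quality and bias mitigation can start with very simple statistics. The sketch below, in plain Python, computes per-group selection rates and the ratio between the lowest and highest rate (often called the disparate impact ratio). The group labels and decisions are made-up sample data, and the 0.8 review threshold is a convention borrowed from US employment practice (the "four-fifths rule"), not a figure taken from the EU AI Act.

```python
from collections import defaultdict
from typing import Hashable, Sequence


def selection_rates(groups: Sequence[Hashable], decisions: Sequence[int]) -> dict:
    """Share of positive decisions (1) per group."""
    totals: dict = defaultdict(int)
    positives: dict = defaultdict(int)
    for group, decision in zip(groups, decisions):
        totals[group] += 1
        positives[group] += int(decision)
    return {group: positives[group] / totals[group] for group in totals}


def disparate_impact_ratio(rates: dict) -> float:
    """Lowest selection rate divided by the highest; 1.0 means equal rates."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # nobody was selected in any group, so rates are equal
    return min(rates.values()) / highest


# Made-up audit sample: group label and model decision (1 = selected).
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]
decisions = [1, 1, 1, 0, 1, 0, 0, 0]

rates = selection_rates(groups, decisions)
ratio = disparate_impact_ratio(rates)
needs_review = ratio < 0.8  # assumed review threshold, see note above
print(rates, round(ratio, 3), needs_review)
```

A single ratio like this is only a screening signal. A flagged result should prompt a closer look at the training data and model behaviour rather than serve as proof of discrimination, and the same check can be rerun on production decisions as part of continuous monitoring.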

Consequences of Non-Compliance with the EU AI Act

The penalties for non-compliance are substantial and are tiered based on the severity of the violation:

- Violations of Prohibited AI Practices: Up to €35 million or 7% of the company's total worldwide annual turnover for the preceding financial year, whichever is higher.

- Non-Compliance with Other Obligations (e.g., high-risk AI requirements): Up to €15 million or 3% of the company's total worldwide annual turnover for the preceding financial year, whichever is higher.

- Supplying Incorrect, Incomplete, or Misleading Information to Notified Bodies or National Competent Authorities: Up to €7.5 million or 1% of the company's total worldwide annual turnover for the preceding financial year, whichever is higher.
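The "whichever is higher" rule in the tiers above is simple arithmetic: the maximum fine is the larger of the fixed amount and the percentage of worldwide annual turnover. The short Python sketch below works through it for the first two tiers; the function name and the example turnover figures are illustrative assumptions, and actual fines are set case by case by the authorities up to these caps.

```python
def penalty_cap_eur(fixed_cap_eur: int, turnover_percent: int, annual_turnover_eur: int) -> int:
    """Upper bound of a fine: the higher of the fixed cap and the turnover share."""
    # Integer arithmetic avoids floating-point rounding on large euro amounts.
    turnover_based_cap = annual_turnover_eur * turnover_percent // 100
    return max(fixed_cap_eur, turnover_based_cap)


# (fixed cap in EUR, percent of worldwide annual turnover)
PROHIBITED_PRACTICES = (35_000_000, 7)
OTHER_OBLIGATIONS = (15_000_000, 3)

# Hypothetical company with EUR 1 billion turnover: 7% (EUR 70 million) exceeds the fixed cap.
large_company_cap = penalty_cap_eur(*PROHIBITED_PRACTICES, annual_turnover_eur=1_000_000_000)

# Hypothetical company with EUR 100 million turnover: the fixed EUR 35 million cap applies.
small_company_cap = penalty_cap_eur(*PROHIBITED_PRACTICES, annual_turnover_eur=100_000_000)

print(large_company_cap, small_company_cap)
```

For large organizations the percentage is usually the binding figure, which is why the turnover-based cap matters more than the headline euro amounts.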

Beyond financial penalties, non-compliance can lead to:

- Reputational Damage: Loss of trust from customers, partners, and the public.

- Market Exclusion: Prohibition from placing non-compliant AI systems on the EU market.

- Legal Action: Lawsuits from individuals or groups harmed by non-compliant AI systems.

- Operational Disruptions: Remediation efforts can be costly and time-consuming, disrupting business operations.

How ImmuniWeb Helps Comply with the EU AI Act

While ImmuniWeb is primarily known for its AI-powered cybersecurity and penetration testing platform, its capabilities can significantly contribute to an organization's EU AI Act compliance efforts, particularly in the critical areas of cybersecurity, robustness, data integrity, and documentation support for AI systems.

ImmuniWeb conducts deep API penetration testing, uncovering vulnerabilities like insecure endpoints, broken authentication, and data leaks, ensuring compliance with OWASP API Security Top 10.

Automated AI-driven scans detect misconfigurations, excessive permissions, and weak encryption in REST, SOAP, and GraphQL APIs, providing actionable remediation insights.

ImmuniWeb provides Application Penetration Testing services with our award-winning ImmuniWeb® On-Demand product.

The award-winning ImmuniWeb® AI Platform for Application Security Posture Management (ASPM) helps aggressively and continuously discover an organization's entire digital footprint, including hidden, unknown, and forgotten web applications, APIs, and mobile applications.

ImmuniWeb continuously discovers and monitors exposed IT assets (web apps, APIs, cloud services), reducing blind spots and preventing breaches via real-time risk scoring.

ImmuniWeb provides Automated Penetration Testing services with our award-winning ImmuniWeb® Continuous product.

Simulates advanced attacks on AWS, Azure, and GCP environments to identify misconfigurations, insecure IAM roles, and exposed storage, aligning with CIS benchmarks.

Automates detection of cloud misconfigurations, compliance gaps (e.g., PCI DSS, HIPAA), and shadow IT, offering remediation guidance for a resilient cloud infrastructure.

Combines AI-powered attack simulations with human expertise to test defenses 24/7, mimicking real-world adversaries without disrupting operations.

Runs automated attack scenarios to validate security controls, exposing weaknesses in networks, apps, and endpoints before attackers exploit them.

Provides ongoing, AI-augmented pentesting to identify new vulnerabilities post-deployment, ensuring proactive risk mitigation beyond one-time audits.

Prioritizes and remediates risks in real time by correlating threat intelligence with asset vulnerabilities, minimizing exploit windows.

Monitors dark web, paste sites, and hacker forums for stolen credentials, leaked data, and targeted threats, enabling preemptive action.

The award-winning ImmuniWeb® AI Platform for Data Security Posture Management helps continuously discover and monitor an organization's internet-facing digital assets, including web applications, APIs, cloud storage, and network services.

Scans underground markets for compromised employee/customer data, intellectual property, and fraud schemes, alerting organizations to breaches.

Tests iOS/Android apps for insecure data storage, reverse engineering risks, and API flaws, following OWASP Mobile Top 10 guidelines.

Automates static (SAST) and dynamic (DAST) analysis of mobile apps to detect vulnerabilities like hardcoded secrets or weak TLS configurations.

Identifies misconfigured firewalls, open ports, and weak protocols across on-premises and hybrid networks, hardening defenses.

Delivers scalable, subscription-based pentesting with detailed reporting and remediation tracking for agile security workflows.

Detects and expedites takedowns of phishing sites impersonating your brand, minimizing reputational damage and fraud losses.

Assesses vendors’ security posture (e.g., exposed APIs, outdated software) to prevent supply chain attacks and ensure compliance.

Simulates advanced persistent threats (APTs) tailored to your industry, testing detection/response capabilities against realistic attack chains.

Manual and automated tests uncover SQLi, XSS, and business logic flaws in web apps, aligned with OWASP Top 10 and regulatory standards.

Performs continuous DAST scans to detect vulnerabilities in real time, integrating with CI/CD pipelines for DevSecOps efficiency.

By leveraging ImmuniWeb's advanced security testing and management platform, organizations can significantly strengthen the technical security posture of their AI systems, directly addressing crucial aspects of the EU AI Act's compliance requirements, particularly for high-risk applications. While it's one piece of the puzzle, a robust cybersecurity framework provided by tools like ImmuniWeb is indispensable for comprehensive AI Act compliance.
